Built in 4 Weeks. Launched 7 Days Early. Then They Rolled It Out Across the Whole Series.

Timeline

4-week build

Stack

Next.js, Supabase, OpenAI API, Mixpanel, Sentry, DigitalOcean

Result

Deployed at the first event, then rolled out across a series of 50- to 700-person events

There's a specific kind of deadline that has no flexibility built into it.

A contract signing, a regulatory filing, a product launch tied to a funding announcement - these can sometimes move. But an event? The venue is booked. The speakers confirmed. The tickets sold. The date is the date, and the software either works that day or it doesn't.

The founder running this event series knew exactly what kind of deadline he was working with. He also knew that networking at business events is, mostly, broken. You show up, you get a badge, you stand in a room with 300 people and hope you accidentally end up next to someone relevant. Most of the time you don't. Most of the time you leave with a pocket full of cards you won't follow up on and one decent conversation you had by luck near the coffee station.

His idea: fix that with AI. Match attendees before the event. Surface relevant connections. Make the "right person in the room" something the platform decides, not something you leave to chance.

Good idea. Four weeks to build it. One-week buffer before go-live.

Scope First, Speed Second

The instinct with a hard deadline is to start building immediately. That's usually how you end up rebuilding.

Week one was scope. Not planning meetings, not stakeholder alignment - a scope document. What the platform needed to do for the first event: attendee profiles, AI-powered matching, a way for people to see their matches and request connections before they walked in the door. What it explicitly wasn't doing in v1: in-app messaging, a mobile native app, post-event follow-up flows. Those could come later. The event needed a working core.

The IN/OUT list wasn't bureaucracy. It was the thing that made four weeks possible.

The Matching Problem

Networking platforms that use AI for matching aren't new. Most of them are bad at it in the same way: they match on surface-level profile data, produce recommendations that feel vaguely relevant, and give users no reason to trust the output.

The difference between a match that feels algorithmic and one that feels useful is usually specificity. Not "you're both in fintech" but "you're building a payments product and she spent three years at Stripe's risk team." The first one is a category. The second one is a reason to actually walk over and introduce yourself.

Getting that specificity out of OpenAI's API meant thinking carefully about what data to collect during onboarding and how to structure the prompt. Too much context and the model rambles. Too little and the matches are generic. We iterated on this in staging - ran test profiles through, looked at the outputs, adjusted. By week two it was producing matches worth trusting.

The founder reviewed them. He knew his attendees. When he started recognizing why two people were being matched together - not just that they were - we knew the logic was right.
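To make the "too much context, too little context" trade-off concrete, here is a minimal sketch of how a matching prompt could be assembled from onboarding data. Everything in it is an assumption for illustration: the profile fields, the character and candidate limits, the wording of the instructions, and the names `AttendeeProfile`, `clip`, and `buildMatchMessages` are hypothetical, not the fields or prompt used on this project. It only builds the message list; sending it to the model is left out.

```typescript
// Hypothetical profile shape. The article does not list the real onboarding fields.
interface AttendeeProfile {
  id: string;
  role: string;
  building: string; // what they are working on right now
  lookingFor: string; // who or what they hope to find at the event
  background: string[]; // a few concrete past roles or projects
}

interface ChatMessage {
  role: "system" | "user";
  content: string;
}

// Assumed limits: enough detail for a specific reason, not enough to make the model ramble.
const MAX_FIELD_CHARS = 280;
const MAX_BACKGROUND_ITEMS = 3;
const MAX_CANDIDATES = 20;

// Collapse whitespace and cap the length of a free-text field.
function clip(text: string, max: number = MAX_FIELD_CHARS): string {
  const t = text.trim().replace(/\s+/g, " ");
  return t.length <= max ? t : t.slice(0, max - 3).trimEnd() + "...";
}

// Render one profile as a compact, labeled block.
function describe(p: AttendeeProfile): string {
  const background = p.background
    .slice(0, MAX_BACKGROUND_ITEMS)
    .map((item) => clip(item))
    .join("; ");
  return [
    `id: ${p.id}`,
    `role: ${clip(p.role)}`,
    `building: ${clip(p.building)}`,
    `looking for: ${clip(p.lookingFor)}`,
    `background: ${background}`,
  ].join("\n");
}

// Build the messages for one attendee against a bounded pool of candidates.
function buildMatchMessages(
  attendee: AttendeeProfile,
  candidates: AttendeeProfile[],
): ChatMessage[] {
  const pool = candidates
    .filter((c) => c.id !== attendee.id) // never match someone with themselves
    .slice(0, MAX_CANDIDATES);

  const system = [
    "You match attendees at a business event.",
    "For each match, give one concrete reason grounded only in the profiles provided.",
    "Do not match on industry alone.",
    'Reply as JSON: [{"id": string, "reason": string}].',
  ].join(" ");

  const user = [
    "ATTENDEE",
    describe(attendee),
    "",
    "CANDIDATES",
    pool.map((c) => describe(c)).join("\n\n"),
  ].join("\n");

  return [
    { role: "system", content: system },
    { role: "user", content: user },
  ];
}
```

Capping each field and the candidate pool is one way to keep the context bounded, while asking for a stated reason per match gives a reviewer something to check against what they know about the attendees.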

Thursday Demo, Week Two

Staging link went out Thursday of week two.

The founder logged in as a test attendee, filled out an onboarding profile, and saw his matches. He requested a connection. Got the confirmation state. Checked the admin view - saw the request come through, saw the match data, saw the analytics event fire in Mixpanel.

He sent one message: "This is it!"

That message matters more than it sounds. At week two, there's still time to fix things. There's still time to change a flow that isn't working, cut a feature that's taking too long, adjust the matching logic if something feels off. The Thursday demo cadence exists precisely because feedback at week two is cheap. Feedback at day-before-launch is expensive.

We had two weeks left and a clear picture of exactly what needed to happen in them.

The Hard Parts

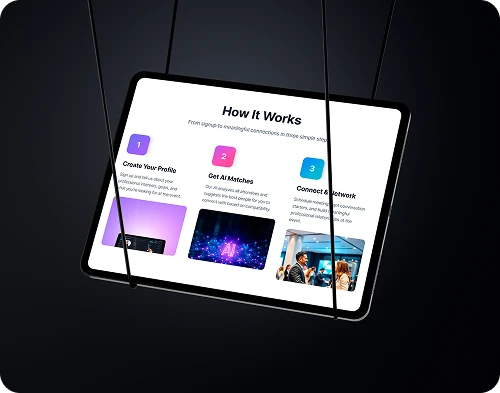

Matching is only half the problem. The other half is getting people to actually engage with their matches before the event - because a platform that shows you relevant people three hours before you walk in is less useful than one that's been priming the conversation for a week.

The onboarding flow had to be short enough that people would complete it on their phone in five minutes, and rich enough that the matching had something real to work with. That tension doesn't resolve cleanly. You make judgment calls, test them, adjust. We shipped two iterations of the onboarding before the event. Both went through staging first.

Supabase handled the real-time match updates and the connection request state cleanly. But row-level security on attendee data needed careful configuration - event organizers should see aggregate data, attendees should only see their own matches and confirmed connections, and nobody should be able to enumerate the full attendee list. Three different access patterns, all live on the same database. Get one wrong and you have a privacy problem at an event where people are handing over their professional profiles.

We didn't get it wrong. But it took the time it took.
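One way to keep three access patterns straight is to write them down as a small, testable rule before translating them into database policies. The sketch below does that in plain TypeScript. It is not Supabase policy code and not the project's implementation: the `Viewer` and `Resource` types and the `canRead` function are hypothetical, and the handling of pending requests and of organizer access to individual records are assumptions that go beyond what the access rules above spell out.

```typescript
type Role = "organizer" | "attendee";

interface Viewer {
  id: string;
  role: Role;
}

// Hypothetical resource kinds covering the three access patterns.
type Resource =
  | { kind: "aggregate_stats" }
  | { kind: "match"; ownerId: string }
  | { kind: "connection"; fromId: string; toId: string; status: "pending" | "confirmed" }
  | { kind: "attendee_list" };

// Returns true only when the viewer is allowed to read the resource.
function canRead(viewer: Viewer, resource: Resource): boolean {
  switch (resource.kind) {
    case "aggregate_stats":
      // Organizers see aggregate data.
      return viewer.role === "organizer";
    case "match":
      // Attendees see only their own matches.
      return viewer.role === "attendee" && resource.ownerId === viewer.id;
    case "connection":
      // Attendees see confirmed connections they are part of.
      // Assumption: pending requests are not readable in this sketch.
      return (
        viewer.role === "attendee" &&
        resource.status === "confirmed" &&
        (resource.fromId === viewer.id || resource.toId === viewer.id)
      );
    case "attendee_list":
      // Nobody can enumerate the full attendee list.
      return false;
  }
}
```

A table of cases like this can double as a checklist when reviewing the real row-level security policies: each branch should have a matching policy, and each denied case is worth testing against the live database with a non-privileged key.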

Seven Days Early

Production deploy landed seven days before the event.

Not seven days before the deadline - seven days before the event itself. Enough time for the organizer to onboard early registrants, watch real usage data come through Mixpanel, and confirm that nothing was on fire in Sentry. It wasn't. The launch was stable.

Fifty people attended that first event. Not 700 - fifty. But the right fifty, with the right matches, and a platform that worked without a single incident on the day.

Then They Ran It Again

The real signal wasn't the first event. It was what happened after.

The organizer didn't need a rebuild for the next event. He didn't need a new scope conversation or a new timeline. He had a working platform that he understood, with a handoff doc that covered how to onboard a new event, how to review the match quality before sending connections, and what to watch in the analytics.

He ran it again. Then again. The series now runs everything from 50-person roundtables to 700-person conferences on the same codebase.

That's what a proper v1 looks like. Not something you throw away after the first use. Something you build on.